New technologies have the potential to greatly simplify the lives of humans, including those of blind individuals. Among the most promising tools designed to assist the blind are visual prostheses.

Visual prostheses are medical devices that can be implanted in the brain. These devices could help to restore vision in people affected by different types of blindness. Despite their huge potential, most existing visual prostheses have achieved unimpressive results, as the vision they can produce is extremely rudimentary.

A team of researchers at the University of California, Santa Barbara recently developed a machine learning model that could significantly enhance the performance of visual prostheses, as well as other sensory neuroprostheses (i.e., devices aimed at restoring lost sensory functions or augmenting human abilities). The model they developed, presented in a paper pre-published on arXiv, is based on a neural autoencoder, a brain-inspired architecture that can discover specific patterns in data and create representations of them.

“We started working on this project in an attempt to solve the long-standing problem of stimulus optimization in visual prostheses,” Jacob Granley, one of the researchers who carried out the study, told TechXplore. “One of the likely causes for the poor results achieved by visual prostheses is the naive stimulus encoding strategy that devices conventionally use. Previous works have suggested encoding strategies, but many are unrealistic, and none have given a general solution that could work across implants and patients.”

The main objective of the recent work by Granley and his colleagues was to devise a simple and effective solution that could help to improve the encoding strategies of sensory neuroprostheses. They wanted this strategy to attain good results with different types of sensory data, as this would make it easy to implement across a variety of neuroprosthetic devices.

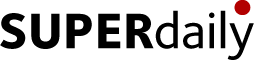

“Our main idea was to utilize a sensory model, which describes the perceptions or neural responses resulting from stimulation, in-the-loop within a deep neural network,” Granley explained. “The neural network was trained to output stimuli that, when fed through the sensory model, achieve the desired target response. Thus, the system is a hybrid autoencoder, where the encoder is a learned neural network, and the decoder is the fixed sensory model.”
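
To make the idea concrete, here is a minimal sketch in Python. It is not the researchers' implementation. It assumes a toy linear "blur" as the fixed sensory model and a single linear layer as the encoder, whereas the paper uses a deep neural network and a far richer model of perception. The names `BLUR`, `sensory_model`, `train_encoder` and the choice of four electrodes are hypothetical, picked only for illustration. Only the encoder weights are updated during training; the sensory model stays fixed and simply passes gradients back through.

```python
import random

N = 4  # hypothetical number of electrodes (and percept "pixels")

# Fixed decoder: a toy sensory model in which each electrode's activation
# leaks into neighbouring pixels. This matrix is an assumption for
# illustration, not the sensory model used in the paper.
BLUR = [[0.6 if i == j else 0.2 if abs(i - j) == 1 else 0.0
         for j in range(N)] for i in range(N)]

# Naive encoding strategy: stimulate each electrode with the target value.
IDENTITY = [[1.0 if i == j else 0.0 for j in range(N)] for i in range(N)]


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def sensory_model(stimulus):
    """Predict the percept produced by a stimulus (never trained)."""
    return matvec(BLUR, stimulus)


def loss(encoder, target):
    """Squared error between the predicted percept and the target."""
    percept = sensory_model(matvec(encoder, target))
    return sum((p - t) ** 2 for p, t in zip(percept, target))


def train_encoder(steps=5000, lr=0.1, seed=0):
    """Fit a linear encoder by gradient descent through the fixed decoder."""
    rng = random.Random(seed)
    weights = [row[:] for row in IDENTITY]  # start from the naive encoding
    for _ in range(steps):
        target = [rng.random() for _ in range(N)]
        percept = sensory_model(matvec(weights, target))
        # Gradient of the squared error with respect to the percept.
        err = [2.0 * (p - t) for p, t in zip(percept, target)]
        # Backpropagate through the fixed sensory model: grad = BLUR^T err.
        grad = [sum(BLUR[i][j] * err[i] for i in range(N)) for j in range(N)]
        # Update only the encoder; the decoder is left untouched.
        for j in range(N):
            for k in range(N):
                weights[j][k] -= lr * grad[j] * target[k]
    return weights


if __name__ == "__main__":
    encoder = train_encoder()
    target = [0.0, 1.0, 0.0, 0.0]  # a single bright pixel
    print("naive encoding loss:  ", loss(IDENTITY, target))
    print("learned encoding loss:", loss(encoder, target))
```

In this toy setting the learned encoder should produce a much lower error than the naive one, because it learns to pre-compensate for the blur that the sensory model introduces.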

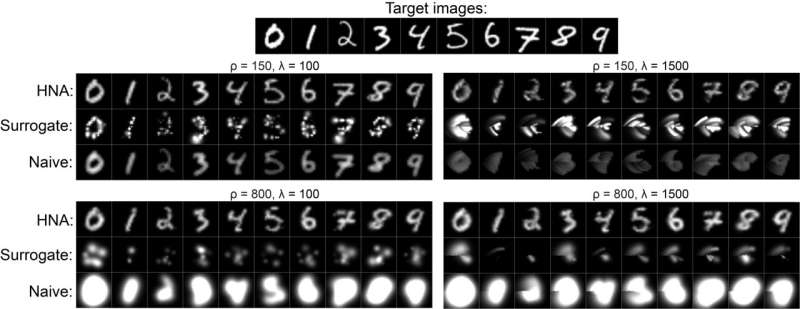

So far, the researchers have evaluated the performance of their neural autoencoder-based approach in the context of visual neuroprostheses. They found that it consistently led to higher-quality visual perceptions across a wide range of virtual patients, which is a significant step forward on the path toward reliable bionic vision.

The neural encoder created by Granley and his colleagues generated far more convincing visual stimuli than conventional encoding strategies, using the same training datasets. Notably, it could also easily be applied to other neuroprostheses that can be described using a sensory model, including those designed to enhance the senses of hearing and touch.

“I’m excited about the potential broader impact of our framework,” Granley said. “We were able to demonstrate the benefit gained by ‘closing the loop on perception,’ or in other words, including in-the-loop a model of the effects of stimulation on the patient’s perception. This could be useful for a variety of prostheses. For example, cochlear implants could use this framework to improve auditory perceptions.”

The model introduced by this team of researchers could eventually be used by developers to improve the quality of the vision enabled by visual neuroprosthetic devices. In addition, it could be applied to existing prosthetic limbs to produce more convincing feelings of cutaneous touch in patients who have lost limbs.

“In this project, we only used virtual, simulated patients,” Granley added. “In the future, I would like to test our encoder on human patients with implanted visual prostheses. If we could attain the same improvement on real patients, then this would mark a huge step towards restoring vision to millions of people suffering from blindness.”

More information: Jacob Granley, Lucas Relic, Michael Beyeler, Hybrid neural autoencoder for sensory neuroprostheses and its applications in bionic vision. arXiv:2205.13623v1 [cs.LG], arxiv.org/abs/2205.13623

Journal information: arXiv

© 2022 Science X Network

Citation: A neural autoencoder to enhance sensory neuroprostheses (2022, June 21) retrieved 21 June 2022 from https://techxplore.com/news/2022-06-neural-autoencoder-sensory-neuroprostheses.html
